Truth and Choices: Computational v. Analytical formal models

How do we show a statement about politics is true? Analytic formal modelers suggest one way:

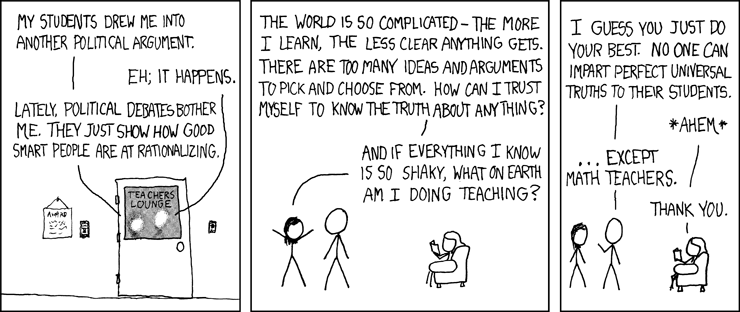

The way to be sure that a statement about politics is true is to make a formal argument: explicitly state the assumptions and use rigorous logic to create a 100% valid argument from assumptions to conclusions. Euclidean geometry was a popular touchstone of Truth™ for two thousand years…until Gauss, Bolyai, and Lobachevsky showed that one of Euclid’s assumptions, the parallel postulate, was arbitrary.

Oddly enough, most of the mathematicians I know do not assert that math reveals Truth™. For a set of statements to be true, they must not disagree. Gödel showed us that we cannot be sure that math does not contradict itself. Accepting this means abandoning the claim that 100% truth can be generated analytically or using any logical system. For many mathematicians (not including probabilists or statisticians), once something is uncertain, all bets are off.

Analytic formal modelers know this, but assert that deduction (assumptions + logic => conclusion) is better than statistical induction (theory + data => conclusion). Let’s take a look at this analytically, shall we? Consider this statement that is true simply as an application of the definition of conditional probability:
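A minimal way to write such a statement, using symbols that are assumptions here rather than the author's notation ($A$: the assumptions are true; $C$: the conclusion is true):

$$
P(A \cap C) = P(C \mid A)\, P(A)
$$

Because $P(C) \ge P(A \cap C)$, the product on the right is a lower bound on the probability that the conclusion is true.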

Analytic modelers use a deductive chain of reasoning, which has a (conditional) probability of being true that approaches 1, so the probability of the conclusion being true is essentially the same as the probability that the assumptions are true. One critique of this approach is that all assumptions, if pushed far enough, are false. However, that attitude underestimates the usefulness of analytic results. If the assumptions are close to being true and the chain of reasoning is “well-behaved” (robust to small errors in inputs), then the conclusions will be close to true.

Computational (including agent-based) modelers also start with clear, explicit assumptions, but they use an inductive chain of reasoning, which is less likely to be conditionally true than a deductive one. However, their starting assumptions are often much more realistic:

| Common analytic assumptions | Common computational assumptions |
| --- | --- |
| 1, 2, or infinitely many actors | 5, 50, 200 actors |
| static discounting | dynamic discounting |
| “Common knowledge” about the state of the world, i.e., a kind of omniscience | Local/limited knowledge |

If the assumptions made in a computational model are more likely to be true (or nearly true) and the chain of reasoning is strong, then the conclusions are more likely to be true than those “proven” using an analytic formal model.
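The comparison can be made concrete with a small sketch. It multiplies the probability that the assumptions hold by the conditional probability that the chain of reasoning is sound, which is the conditional-probability product discussed earlier. The function name and all four numbers are hypothetical, chosen only to illustrate the trade-off; they are not estimates for any real model.

```python
def joint_probability(p_assumptions: float, p_chain_given_assumptions: float) -> float:
    """Return P(assumptions and conclusion) = P(conclusion | assumptions) * P(assumptions)."""
    for p in (p_assumptions, p_chain_given_assumptions):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    return p_chain_given_assumptions * p_assumptions


# Hypothetical analytic model: near-certain deduction, less realistic assumptions.
analytic = joint_probability(p_assumptions=0.30, p_chain_given_assumptions=0.99)

# Hypothetical computational model: weaker induction, more realistic assumptions.
computational = joint_probability(p_assumptions=0.60, p_chain_given_assumptions=0.80)

print(f"analytic:      {analytic:.3f}")
print(f"computational: {computational:.3f}")
```

With these made-up inputs the computational model comes out ahead, but the ordering reverses if the inductive chain is made weak enough, which is why the choice depends on the specific assumptions and the strength of the induction.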

Of course, that’s a tall order to fill. Your mileage may vary. Whether an analytic or a computational approach is more appropriate depends on the specific assumptions being made and the strength of the induction used in the computational approach. This is a trade-off that should be made mindfully, not dogmatically.